AI agentic automation brings the promise of enormous gains in efficiency and productivity. The desired characteristics of AI agent systems are:

Autonomy -- the freedom to decide what to do

Agency -- the freedom to act without seeking approval

In a business environment, there is an additional essential requirement: process workflows need reliable behavior -- predictable, consistent, compliant, and transparent. However, AI agents are built on large language models (LLMs), which are probabilistic and can make mistakes. This makes it challenging to apply AI agents to business workflows.

Thunk.AI is the AI agentic automation platform that uniquely focuses on, and excels at, AI Reliability. One measure of the Thunk.AI platform's end-to-end reliability is captured by the Hi-Fi Reliability Benchmarks:

AI Reliability for Enterprise Document Workflows

A closer look at our September 2025 benchmark implementation, achieving an industry-leading 97.3% AI Fidelity score.


AI Reliability for IT Service Management

See how we achieved an industry-leading 99% AI Reliability score in our February 2026 benchmark implementation.


A business workflow has to run repeatedly in similar but somewhat different contexts and with somewhat different inputs. There are four expectations of a reliable workflow in such an environment:

It achieves a desired outcome in each instance.

It follows a prescribed process in each instance.

Where the environment and inputs vary, and where the prescribed process does not specify what to do, it makes intelligent decisions as appropriate.

It automates and executes its decisions transparently.

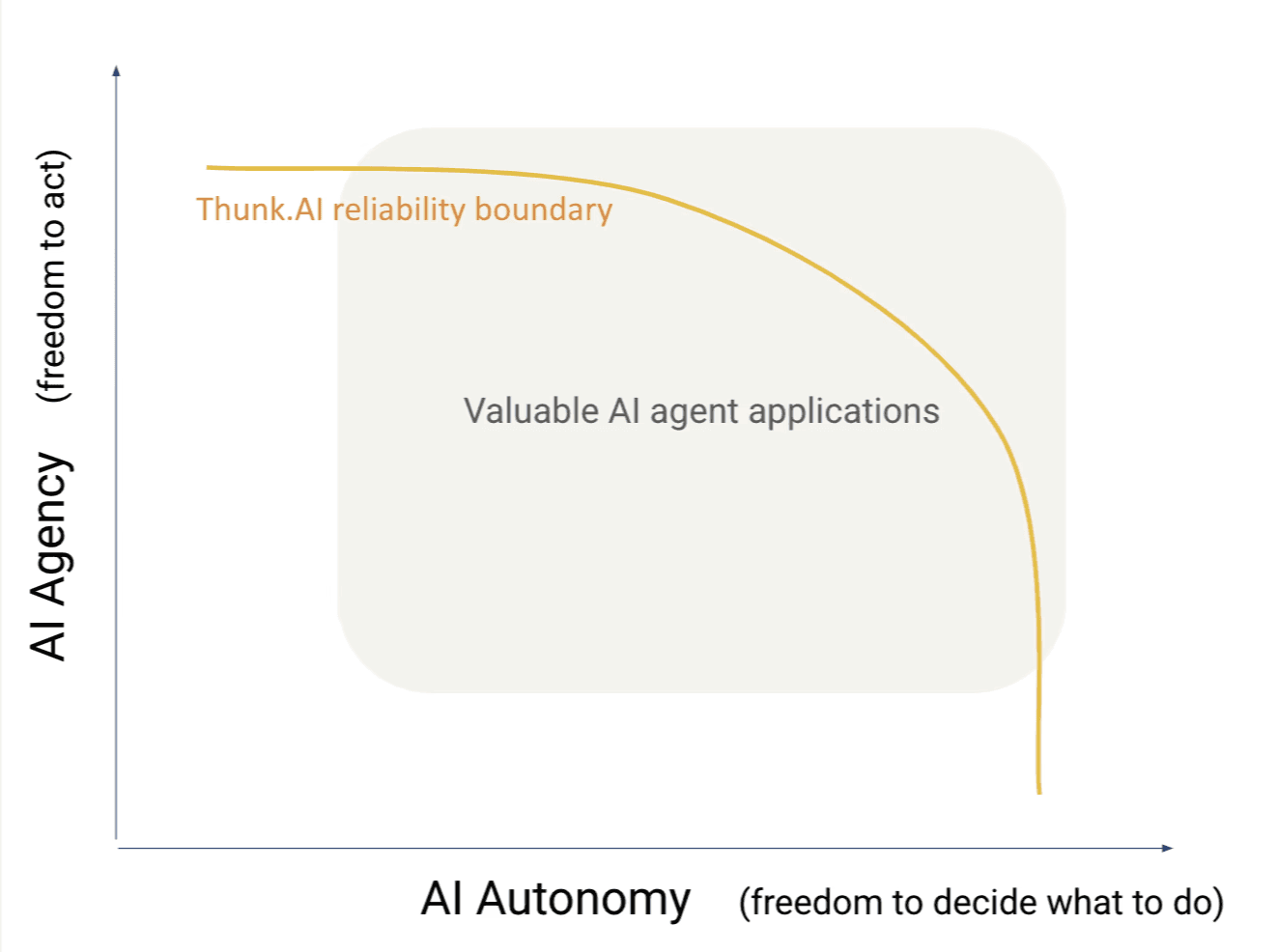

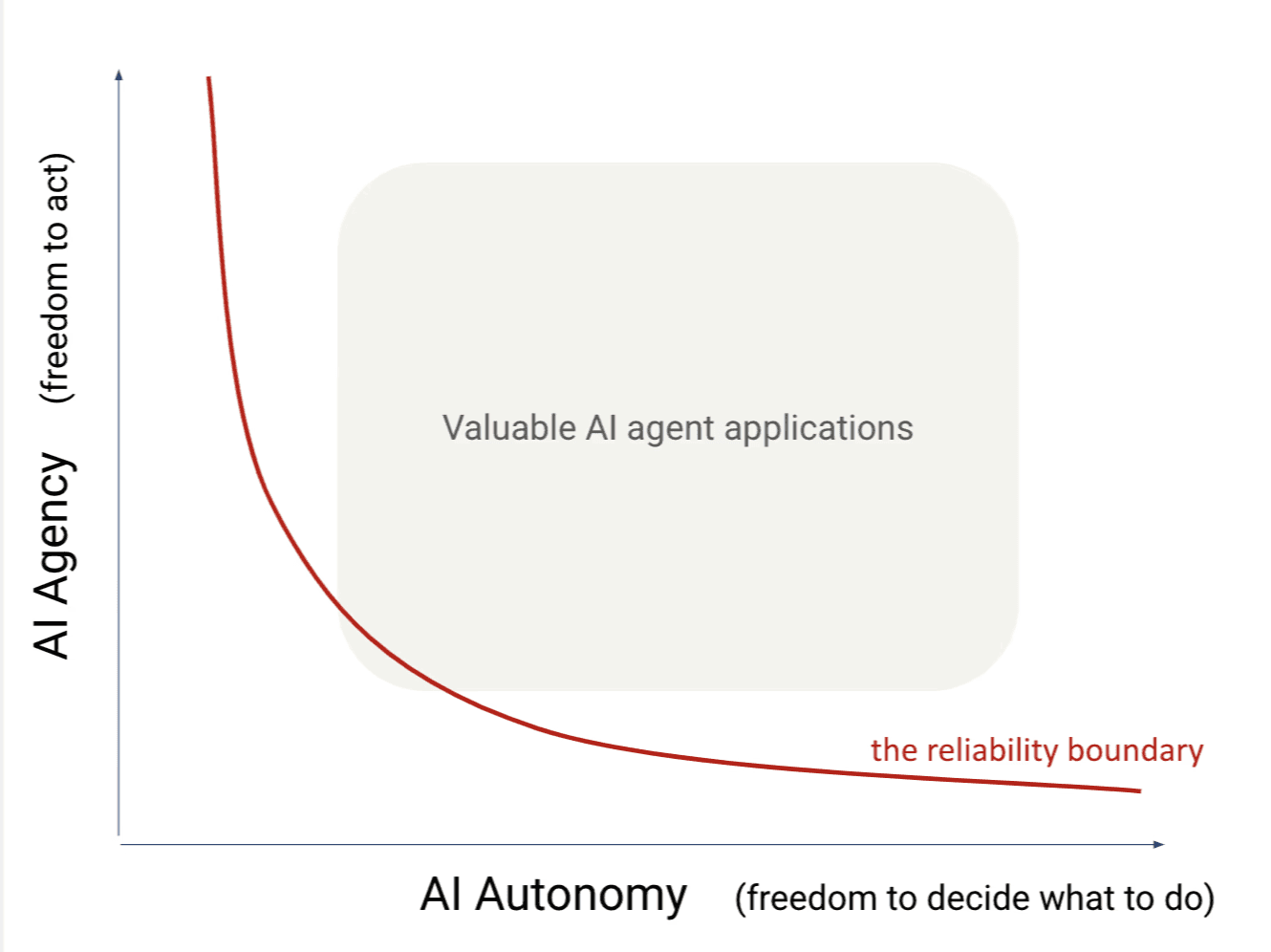

There are inherent tradeoffs between reliable behavior and the degree of AI agency and autonomy. Pragmatic business customers have adapted to the limitations of AI agentic platforms either by building applications with limited autonomy (highly deterministic decision-making, using traditional workflow engines like n8n) or by building applications with limited agency (rich chatbots that analyze information and present answers to humans, who must then vet those answers and decide whether and how to act on them). With most AI agent platforms and implementations, this "reliability boundary", as illustrated in the figure, excludes most high-value business workflows.

Many vendors have pitched “engineering” approaches to the problem of AI reliability. In 2024, the conventional wisdom was to focus on “prompt engineering”: the somewhat mystical art of rewording natural language instructions to coax AI models into following the desired intent. When this approach failed to make agents reliable, there was a growing realization that the “context” presented to the AI model was at least as important. The context includes the examples, the data, the message history, and the tools that the AI model can invoke. In 2025, the conventional wisdom shifted to “context engineering”, the equally mystical art of dynamically shaping the context of each instruction sent to an AI model.

These unscientific and unscalable “engineering hacks” are not a sound basis for AI reliability. They also require deep technical teams and constant tuning in response to small changes in requirements or policies, and they contribute significantly to cost, delay, and poor business ROI. Instead, a set of core principles needs to be applied.

The Thunk.AI platform expands the reliability boundary and makes it possible (and easy!) to automate a broad class of high-value business processes with AI agentic automation.